I recently started my second (hopefully commercial) game with Defold. I decided to build the render script and camera system from scratch so that I know exactly how they work and have no lingering doubts about how to implement new features on top of built-in code.

I will release this system as an asset, similar to rendercam and orthographic. Before I do that, I would like some feedback on features that current camera assets do not support but that would be particularly useful.

Here is what it currently supports:

- Orthographic (2D) projection

- Resize modes

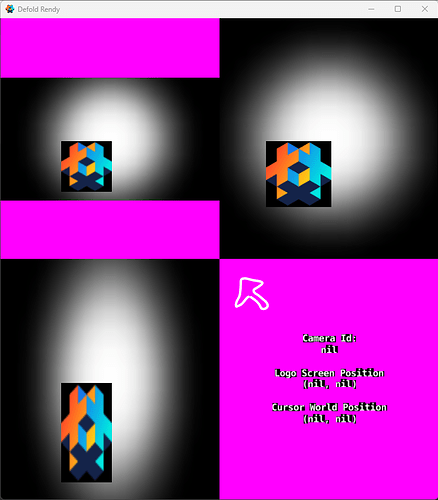

- Multiple cameras and viewports

Here is what I am currently implementing:

- Perspective (3D) projection

- Support for the default render predicates

Here is what is on the backlog:

- Camera shake

- Smooth zooming

- Screen-to-world conversion

- World-to-screen conversion

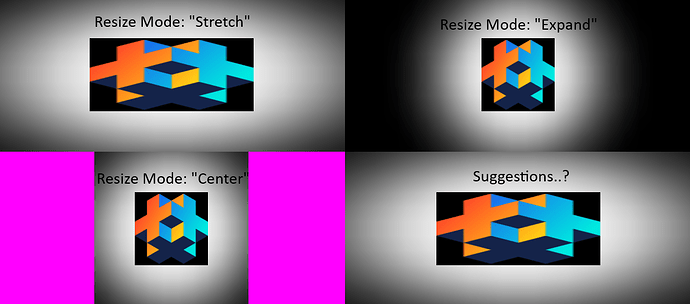

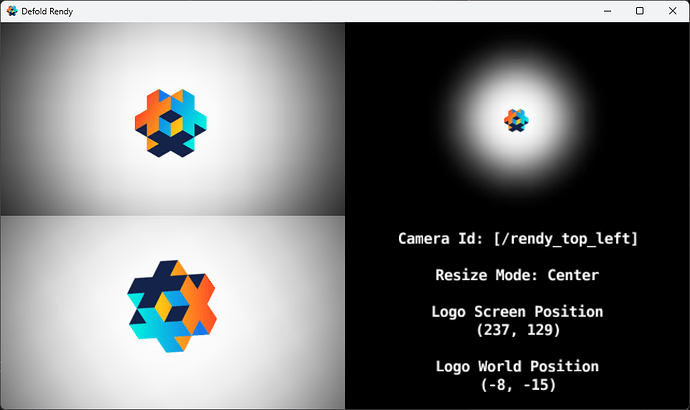

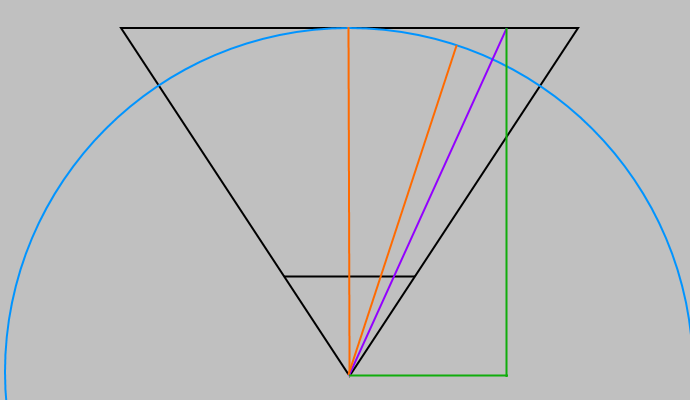

The following screenshot shows what I mean by resize modes:

In this example project, four cameras are active simultaneously, each with its own viewport. A small Defold logo sits in the center, and a large white particle image with an extensive black border is behind it. Magenta is the clear color, visible wherever no sprite is drawn.

- Stretch mode is similar to the built-in stretch projection: the world is stretched to fill the viewport, so it distorts when the viewport's aspect ratio differs from the desired one.

- Expand mode shows more or less of the world as the window size changes.

- Center mode magnifies or shrinks the world to fit the desired resolution / aspect ratio. It also centers the world in the camera’s viewport, which creates the well-known “black bar” effect (in this case, actually a “magenta bar” effect).

Each camera can have its own resize mode, aspect ratio, etc.
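To make the difference between the three modes concrete, here is a minimal sketch of how a camera's viewport and orthographic bounds could be derived for each one. It is written in Python for illustration only, not taken from the asset itself; `Rect`, `Projection` and `compute_projection` are hypothetical names. It assumes that Expand keeps one world unit per pixel (zoom 1), and that Center is implemented by shrinking the camera's viewport so the leftover strips show only the clear color. In a Defold render script, values like these would typically feed `render.set_viewport` and `vmath.matrix4_orthographic`.

```python
from dataclasses import dataclass


@dataclass
class Rect:
    """Pixel rectangle (hypothetical helper type)."""
    x: float
    y: float
    width: float
    height: float


@dataclass
class Projection:
    """Result for one camera (hypothetical): where to draw and what to show."""
    viewport: Rect  # pixel rectangle the camera renders into
    left: float     # orthographic bounds in world units,
    right: float    # relative to the camera position
    bottom: float
    top: float


def compute_projection(mode: str, design_w: float, design_h: float, viewport: Rect) -> Projection:
    vw, vh = viewport.width, viewport.height
    if mode == "stretch":
        # Always show exactly the design area; scaling is non-uniform when the
        # viewport aspect ratio differs from the design aspect ratio.
        return Projection(viewport, -design_w / 2, design_w / 2, -design_h / 2, design_h / 2)
    if mode == "expand":
        # Assumption: one world unit per pixel, so a bigger viewport reveals more world.
        return Projection(viewport, -vw / 2, vw / 2, -vh / 2, vh / 2)
    if mode == "center":
        # Largest uniform scale that still fits the whole design area in the viewport.
        scale = min(vw / design_w, vh / design_h)
        w, h = design_w * scale, design_h * scale
        # Center the scaled area; the remaining strips are the "bars" where only
        # the clear color is visible.
        inner = Rect(viewport.x + (vw - w) / 2, viewport.y + (vh - h) / 2, w, h)
        return Projection(inner, -design_w / 2, design_w / 2, -design_h / 2, design_h / 2)
    raise ValueError(f"unknown resize mode: {mode}")
```

For example, with a 3:2 design resolution of 480x320 and a 640x360 viewport (one quarter of a 1280x720 window), Center mode scales by 1.125 and renders into a 540x360 rectangle offset 50 pixels from the viewport's left edge, while Expand shows 640x360 world units. Because each camera calls this with its own mode, design size and viewport, per-camera settings fall out naturally.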

Please let me know if any feature comes to mind that you’ve had to implement manually or think could be useful for Defold users.