I’ve made a post-processing shader to create a wave effect as part of my level-complete celebration. It works by rendering the game to a texture, then rendering that texture to the screen while offsetting the texture coordinates along a sine wave in the underwater region (excluding the ‘Well Done’ message).

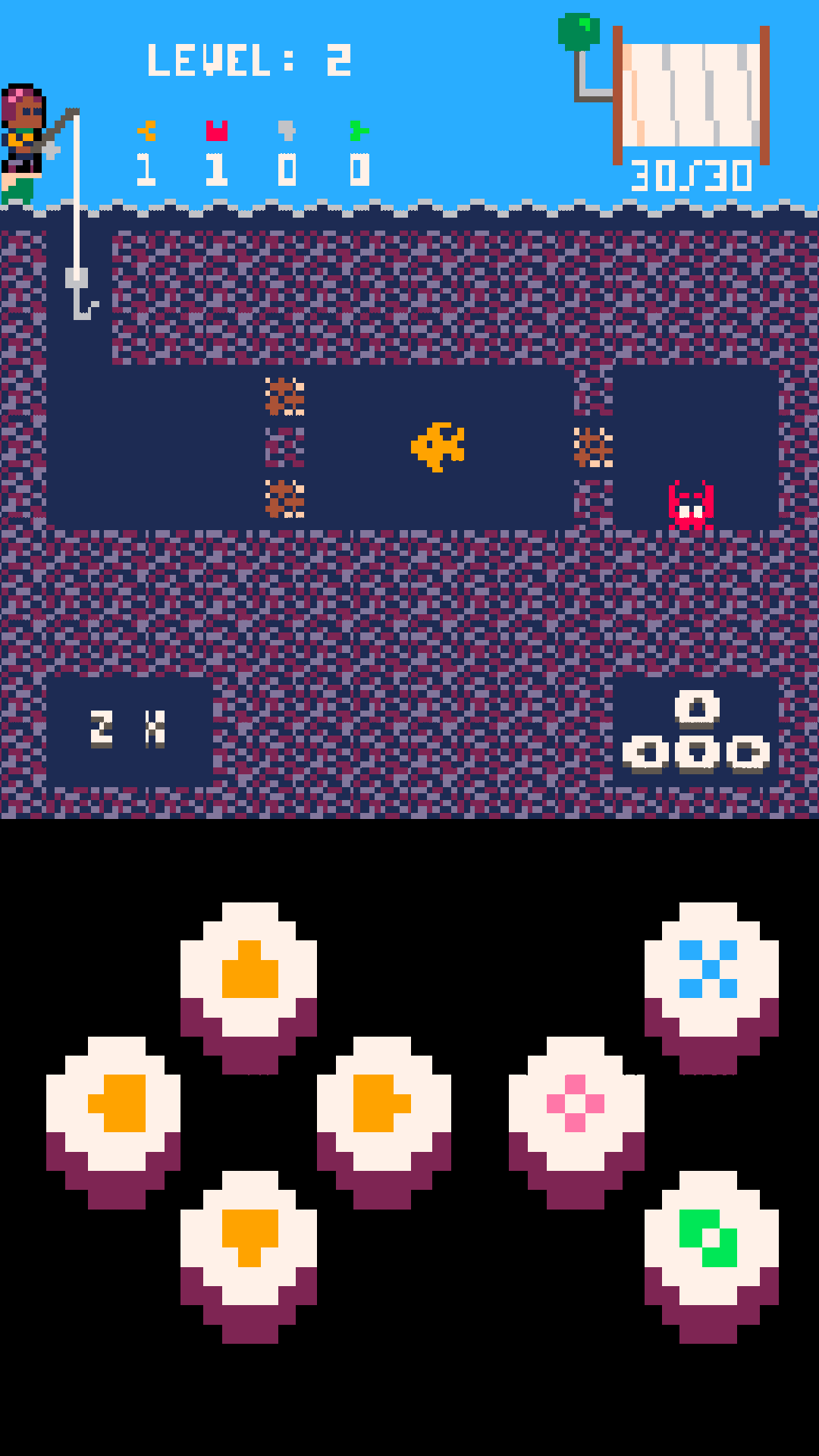

This works on Windows standalone and HTML5, but on mobile (Android 4) the texture coordinates get messed up as soon as I do any post-processing at all. Here I’m not using the wave effect, just rendering to a texture and then rendering that texture to the screen:

The image resolution seems to be getting distorted. With post-processing disabled, the game renders at its normal pixel-perfect 128x128 resolution with perfectly square pixels, even on mobile:

In the project settings the display width and height are set to 512x512, but my game’s virtual resolution is 128x128. So I do some extra maths to make sure that each group of 4 actual pixel rows shifts together as a single virtual pixel row, and that each shift is a multiple of 4 actual pixel columns, so that it still lines up with a virtual pixel column.
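The grouping described above can be sketched in Python, standing in for the shader maths so it can be checked. The function names are hypothetical. Assuming a 512-pixel display and a 128-pixel virtual screen, the sketch shows two things. Grouping physical rows in fours gives the same row as quantizing the texture coordinate to 1/128 steps. A shift of whole virtual columns is always a multiple of 4 physical columns.

```python
import math

DISPLAY_SIZE = 512  # project display width/height (from the post)
VIRTUAL_SIZE = 128  # game's virtual resolution (from the post)
SCALE = DISPLAY_SIZE // VIRTUAL_SIZE  # 4 physical pixels per virtual pixel


def virtual_row(physical_row: int) -> int:
    """Group physical rows in blocks of SCALE: rows 0-3 -> 0, 4-7 -> 1, ..."""
    return physical_row // SCALE


def virtual_row_from_uv(v: float) -> int:
    """The same grouping computed from a texture coordinate v in [0, 1)."""
    return math.floor(v * VIRTUAL_SIZE)


def physical_shift(virtual_columns: int) -> int:
    """A shift of whole virtual columns, expressed in physical columns."""
    return virtual_columns * SCALE


if __name__ == "__main__":
    for row in range(0, 12, 3):
        v = (row + 0.5) / DISPLAY_SIZE  # sample at the pixel centre
        print(row, virtual_row(row), virtual_row_from_uv(v))
    print(physical_shift(2))  # a 2-column virtual shift is 8 physical columns
```

Working in texture-coordinate units (1/128 steps) rather than physical pixels is what keeps the grouping valid if the number of physical pixels changes.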

Here’s the vertex shader (it doesn’t do anything special; I copied it from somewhere else and am including it just for completeness):

```glsl
// view_proj and world are set as vertex constants and we
// access them in our vertex shader program by declaring them
// as "uniform".
uniform mediump mat4 view_proj;
uniform mediump mat4 world;

// position holds the original vertex position.
attribute mediump vec4 position;

// texcoord0 contains the texture coordinate for this vertex.
attribute mediump vec2 texcoord0;

// Define a varying that will interpolate the texture coordinates
// between the vertices. The fragment shader will read this value.
varying mediump vec2 var_texcoord0;

void main()
{
    // Multiply view, projection and world matrices into one.
    mediump mat4 mvp = view_proj * world;

    // The varying var_texcoord0 is at texcoord0 at the position of
    // this vertex.
    var_texcoord0 = texcoord0;

    // Translate the vertex position with the mvp matrix.
    // The vec4(position.xyz, 1.0) ensures that the w component of
    // the position is always 1.0.
    gl_Position = mvp * vec4(position.xyz, 1.0);
}
```
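A common alternative for a full-screen post-process pass is to skip `view_proj * world` for the quad. Instead, the quad is given corners that are already in clip space (-1..1), and identity matrices are used. The texcoords then run exactly 0..1 across the viewport, whatever the window size. This is an assumption about how the pass could be set up, not what the shader above does. The Python sketch below only illustrates that mapping.

```python
def ndc_to_uv(x_ndc: float, y_ndc: float) -> tuple[float, float]:
    """Texcoord that a clip-space full-screen quad puts at NDC position (x, y)."""
    return (x_ndc * 0.5 + 0.5, y_ndc * 0.5 + 0.5)


# Corners of the quad: NDC (-1, -1) .. (1, 1) map to texcoords (0, 0) .. (1, 1).
FULLSCREEN_QUAD = [((x, y), ndc_to_uv(x, y)) for x in (-1.0, 1.0) for y in (-1.0, 1.0)]

if __name__ == "__main__":
    for ndc, uv in FULLSCREEN_QUAD:
        print(ndc, "->", uv)
```

Because the mapping never involves the window size, no 512-specific constants are needed to recover 0..1 texcoords in the fragment shader.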

And here’s my fragment shader:

```glsl
varying mediump vec2 var_texcoord0;

uniform sampler2D target_texture;
uniform mediump vec4 scan_data;

void main()
{
    // Y coords kinda make sense, X ones don't.
    // Only shift coordinates outside of the 'Well Done' message region.
    bool ok = var_texcoord0.x < 70.275 / 128.0 || var_texcoord0.x > 81.725 / 128.0;
    ok = ok || var_texcoord0.y < 46.0 / 128.0 || var_texcoord0.y > 55.0 / 128.0;

    bool enabled = scan_data.x != 0.0;
    float time = scan_data.y;
    float screen_width = scan_data.z;
    float screen_height = scan_data.w; // Not used yet
    float aspect = screen_height / screen_width; // Not used yet

    // I have no idea why but I have to mess with the texture coordinates
    // just to draw the render target to the screen normally.
    vec2 uv = var_texcoord0;
    uv.x += 16.0 / 512.0; // Have to shift by 16 pixels for some reason.
    uv.x *= 4.0; // Also have to scale by 4 because otherwise we only see 32 virtual pixels??

    // Now we're messing with the texture coords for the actual effect (only below water level).
    if (enabled && var_texcoord0.y < 0.735 && ok)
    {
        // First we need to convert from our actual 512 pixel rows to our virtual 128 pixel rows.
        float y = 512.0 * (1.0 - var_texcoord0.y);
        y = float(int(y) / 4);
        y = y / 128.0;

        // Now sine-shift, making sure to stick to virtual resolution of 128 pixel columns.
        float amplitude = 2.0;
        float wavelength = 16.0;
        float frequency = 4.0;
        uv.x += floor(0.5 + amplitude * sin(y * wavelength + frequency * time)) / 128.0;
    }

    gl_FragColor = texture2D(target_texture, uv);
}
```
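The wave branch of the fragment shader can be mirrored in Python to check what it computes. The function names are hypothetical, and the constants are the ones in the shader.

One thing the arithmetic shows is that the 512 cancels out. For v in [0, 1], int(512(1 - v)) / 4 is the same as floor(128(1 - v)). So the row quantization depends only on the texture coordinate, not on the window size. It only assumes that var_texcoord0.y spans 0..1 across the 128 virtual rows.

Also note that `wavelength` acts as a spatial frequency here: larger values give shorter waves.

```python
import math

VIRTUAL_SIZE = 128.0


def wave_offset_u(v: float, time: float, amplitude: float = 2.0,
                  wavelength: float = 16.0, frequency: float = 4.0) -> float:
    """Python mirror of the shader's wave branch: the amount added to uv.x."""
    # float y = 512.0 * (1.0 - v); y = float(int(y) / 4); y = y / 128.0;
    y = float(int(512.0 * (1.0 - v)) // 4) / VIRTUAL_SIZE
    # floor(0.5 + ...) rounds the shift to a whole number of virtual columns.
    columns = math.floor(0.5 + amplitude * math.sin(y * wavelength + frequency * time))
    return columns / VIRTUAL_SIZE


def quantized_row(v: float) -> float:
    """Equivalent row quantization written without the 512 constant."""
    return math.floor(VIRTUAL_SIZE * (1.0 - v)) / VIRTUAL_SIZE


if __name__ == "__main__":
    for v in (0.1, 0.3, 0.5, 0.7):
        print(v, quantized_row(v), wave_offset_u(v, time=1.0) * VIRTUAL_SIZE)
```

With amplitude 2.0 the shift is always one of -2, -1, 0, 1 or 2 virtual columns.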

You’ll notice that I have to fudge the numbers a bit just to get it to render normally, and I don’t know why. Then, for the actual wave effect, I shift the coordinates in such a way that the virtual 128x128 resolution is still respected. The screen_width and screen_height passed in are the results of render.get_window_width() and render.get_window_height(). On standalone and HTML5 that’s 512x512, but on my phone it’s 1080x1920. I’m guessing the problem is that my shader isn’t resolution-independent, but I don’t really understand why it’s getting distorted the way it is, or how to fix it.
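Unrolling the two fudge lines shows what they imply about the incoming texture coordinates. They compute uv.x = 4u + 0.125, so only var_texcoord0.x in [-1/32, 7/32] ends up sampling the render target's 0..1 range. If the fudged version looks correct, the full-screen pass appears to be receiving texcoords that cover about a quarter of the target across the screen, plus a small offset. A quarter of 128 is the 32 virtual pixels mentioned in the shader comment.

The third function is a hypothetical illustration of one way such a ratio can arise: a quad sized for one coordinate space drawn through a projection sized for another. The post doesn't show enough to say whether that's what is happening here.

```python
def fudged_u(u: float) -> float:
    """The two fudge lines from the fragment shader."""
    u += 16.0 / 512.0  # uv.x += 16.0 / 512.0;
    u *= 4.0           # uv.x *= 4.0;
    return u           # equivalent to 4*u + 0.125


def incoming_u_range() -> tuple[float, float]:
    """Range of var_texcoord0.x that the fudge maps onto 0..1."""
    return (-16.0 / 512.0, 0.25 - 16.0 / 512.0)


def u_at_right_edge(quad_width: float, proj_width: float) -> float:
    """Hypothetical: u visible at the right screen edge when a quad spanning
    world x 0..quad_width (u 0..1 across it) is drawn through an orthographic
    projection whose left edge is x = 0 and which covers proj_width units."""
    return proj_width / quad_width


if __name__ == "__main__":
    print(incoming_u_range())          # (-0.03125, 0.21875)
    print(u_at_right_edge(512, 128))   # 0.25, i.e. 32 of 128 virtual columns
```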

Basically, there’s the actual window resolution (1080x1920), the project-defined display resolution (512x512) and my virtual resolution (128x128), and I need all three to play nicely together. When the window resolution matches the display resolution, everything works fine.
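One common way to reconcile the three resolutions in pixel-art games is integer scaling. Pick the largest whole-number scale of the 128x128 virtual screen that fits the window, centre it, and letterbox the rest. This is not necessarily what the project's render script currently does. The Python sketch below covers only the viewport arithmetic, and the function name is hypothetical. In practice the result would feed the viewport set in the render script.

```python
def integer_viewport(window_w: int, window_h: int,
                     virtual_w: int = 128, virtual_h: int = 128) -> tuple[int, int, int, int]:
    """Largest whole-number scale of the virtual screen that fits the window,
    centred. Returns (x, y, width, height) in window pixels."""
    scale = max(1, min(window_w // virtual_w, window_h // virtual_h))
    width, height = virtual_w * scale, virtual_h * scale
    x = (window_w - width) // 2   # left letterbox margin
    y = (window_h - height) // 2  # bottom letterbox margin
    return x, y, width, height


if __name__ == "__main__":
    print(integer_viewport(512, 512))    # (0, 0, 512, 512): 4x4 pixels per virtual pixel
    print(integer_viewport(1080, 1920))  # (28, 448, 1024, 1024): 8x8 per virtual pixel
```

With a viewport like this, every virtual pixel is a whole number of window pixels on both desktop and phone. A post-process pass that works in 1/128 steps of the texcoord then needs no knowledge of the window size.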