I have no idea how to do that:)

Also, the lightmapper UVs are not exported.

Converting, you mean? There are online converters, but I never use those. For batch converting on the Mac I use XnConvert, on Windows IrfanView, and on Linux Phatch. There is also ImageMagick and quite a few other programs. I am on a Mac right now, and both XnConvert and GIMP can convert hdr to png; I just tried it. I am not sure whether the others load hdr files, but I would be surprised if they didn't.

Ah, ok. It was just an idea - replace the hdr lightmapper produces with a png in the slight hope this might work.

After a quick read of your Blender knowledge base document, I would say that this should work with the exporter, with a couple of caveats (will test tomorrow):

- The PBR Material Shader must be selected for export

- The UV channels the lightmaps use must be the second channel, and a lightmap shader must be used in Defold to apply the correct output to the models.

I will look at this tomorrow, and I will provide a simple material for Defold that should support this. The only things I'm not certain about are the Normal formats and the Base Color formats (the inputs into the Principled BSDF shader). They need to be a color (RGBA/RGB) or a texture map (png preferably).

Will post here tomorrow.

OK. Some good news for all.

After tinkering with lightmapper:

https://github.com/Naxela/The_Lightmapper

I have managed to develop a simple extension within Defender to support exporting baked lightmaps to Defold. A new release is available that supports this:

Here's the Blender scene sample:

And the scene exported directly into a Defold project:

To use this new feature, a few things need to be set up correctly.

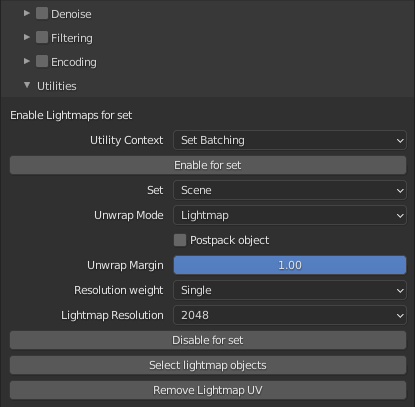

- LightMapper settings need to include the saving of baked lightmaps.

- Once baking for a scene is done, every material's shader nodes need to have the multiply node removed, and the output of the lightmap then attached to the Emission input of the Principled BSDF.

Example:

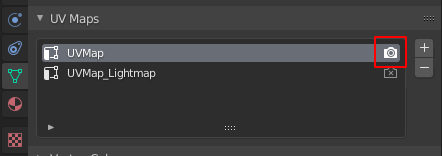

- Ensure that all base texture UV Channels are set to active.

Example (Cyan box shows render_active for the base texture):

- For every material that needs a lightmap exported, rename the material so that its name ends in “_LightMap”.

Example:

- Now export the scene as per normal.

LightMapper Settings:

Wow, awesome!

I will try it on Monday, big thanks!

No probs. It isn't ‘ideal’ but it does seem to produce good results. I’m going to try a more complex scene and see how it goes. It should handle most scenes if the materials are set up with default Principled BSDFs.

If you have any issues, let me know. It even seems to work with default HDR files (they are auto-converted by Blender to png for Defold).

Oh. Additional notes about light levels etc. In Defold you can tweak the lightmap material params variable x,y,z and w. They do the following:

x: Changes the Ambient light level

y: Sets the Albedo level (diffuse texture level)

z: Sets the Specular level

w: Sets an offset level for the light map. For example, if you set it to 0, black shadows stay black; if you set it to 0.5, a black shadow will appear 50% brighter.

These are set here:

A more complicated scene (number of objects and shading):

Blender:

Defold:

Runtime Defold:

The shadow map is fairly low res (that's why it's quite noisy) but it looks pretty good. I might try some high res scenes and see how they turn out.

Note: This takes a while to do because each material needs to have its name modified, the multiply node removed and the inputs relinked. I might try to see if I can make a little script that does this as part of the export process. It would be nice to have this completely automated.
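The renaming part of that chore is easy to script. Below is a minimal sketch, not the exporter's actual code: `lightmap_name` and `rename_for_lightmap` are hypothetical helpers that append the `_LightMap` suffix to anything with a `.name` attribute. Inside Blender you would presumably pass `bpy.data.materials`; plain stand-in objects are used here so it runs anywhere. Removing the multiply node and relinking the inputs is left out.

```python
from types import SimpleNamespace

SUFFIX = "_LightMap"  # suffix the exporter looks for on material names


def lightmap_name(name: str) -> str:
    """Return the name with the suffix appended exactly once."""
    return name if name.endswith(SUFFIX) else name + SUFFIX


def rename_for_lightmap(materials) -> list:
    """Rename every material in place; return the original names that changed."""
    changed = []
    for mat in materials:
        new_name = lightmap_name(mat.name)
        if new_name != mat.name:
            changed.append(mat.name)
            mat.name = new_name
    return changed


# Stand-ins for Blender materials (the names are made up for illustration).
mats = [SimpleNamespace(name="Floor"), SimpleNamespace(name="Wall_LightMap")]
changed = rename_for_lightmap(mats)
print(changed, [m.name for m in mats])
```

Running it twice is harmless, because a name that already ends in the suffix is left alone.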

I tried to export.

The scene looks broken :(

What am I doing wrong?

Blender file: https://drive.google.com/file/d/17dKP-Lne0kj6AiYVTh3T2r-IdSG5frIb/view?usp=sharing

1) The lightmap png textures cannot be opened.

It looks like the files are broken :(

2) I tried saving the hdr files as png from Photoshop.

It looks like the UVs are not exported.

Maybe because I use a base color?

cube.buffer:

[

{

"name": "position",

"type": "float32",

"count": 3,

"data": [

1,1,1.963974237442,-1,1,1.963974237442,-1,-1,1.963974237442,1,1,1.963974237442,-1,-1,1.963974237442,1,-1,1.963974237442,1,-1,-1,1,-1,1.963974237442,-1,-1,1.963974237442,1,-1,-1,-1,-1,1.963974237442,-1,-1,-1,-1,-1,-1,-1,-1,1.963974237442,-1,1,1.963974237442,-1,-1,-1,-1,1,1.963974237442,-1,1,-1,-1,1,-1,1,1,-1,1,-1,-1,-1,1,-1,1,-1,-1,-1,-1,-1,1,1,-1,1,1,1.963974237442,1,-1,1.963974237442,1,1,-1,1,-1,1.963974237442,1,-1,-1,-1,1,-1,-1,1,1.963974237442,1,1,1.963974237442,-1,1,-1,1,1,1.963974237442,1,1,-1

]

},

{

"name": "normal",

"type": "float32",

"count": 3,

"data": [

0.57735031843185,0.57735031843185,0.57735031843185,-0.57735031843185,0.57735031843185,0.57735031843185,-0.57735031843185,-0.57735031843185,0.57735031843185,0.57735031843185,0.57735031843185,0.57735031843185,-0.57735031843185,-0.57735031843185,0.57735031843185,0.57735031843185,-0.57735031843185,0.57735031843185,0.57735031843185,-0.57735031843185,-0.57735031843185,0.57735031843185,-0.57735031843185,0.57735031843185,-0.57735031843185,-0.57735031843185,0.57735031843185,0.57735031843185,-0.57735031843185,-0.57735031843185,-0.57735031843185,-0.57735031843185,0.57735031843185,-0.57735031843185,-0.57735031843185,-0.57735031843185,-0.57735031843185,-0.57735031843185,-0.57735031843185,-0.57735031843185,-0.57735031843185,0.57735031843185,-0.57735031843185,0.57735031843185,0.57735031843185,-0.57735031843185,-0.57735031843185,-0.57735031843185,-0.57735031843185,0.57735031843185,0.57735031843185,-0.57735031843185,0.57735031843185,-0.57735031843185,-0.57735031843185,0.57735031843185,-0.57735031843185,0.57735031843185,0.57735031843185,-0.57735031843185,0.57735031843185,-0.57735031843185,-0.57735031843185,-0.57735031843185,0.57735031843185,-0.57735031843185,0.57735031843185,-0.57735031843185,-0.57735031843185,-0.57735031843185,-0.57735031843185,-0.57735031843185,0.57735031843185,0.57735031843185,-0.57735031843185,0.57735031843185,0.57735031843185,0.57735031843185,0.57735031843185,-0.57735031843185,0.57735031843185,0.57735031843185,0.57735031843185,-0.57735031843185,0.57735031843185,-0.57735031843185,0.57735031843185,0.57735031843185,-0.57735031843185,-0.57735031843185,-0.57735031843185,0.57735031843185,-0.57735031843185,-0.57735031843185,0.57735031843185,0.57735031843185,0.57735031843185,0.57735031843185,0.57735031843185,-0.57735031843185,0.57735031843185,-0.57735031843185,0.57735031843185,0.57735031843185,0.57735031843185,0.57735031843185,0.57735031843185,-0.57735031843185

]

},

{

"name": "texcoord0",

"type": "float32",

"count": 2,

"data": [

0.625,0.5,0.875,0.5,0.875,0.75,0.625,0.5,0.875,0.75,0.625,0.75,0.375,0.75,0.625,0.75,0.625,1,0.375,0.75,0.625,1,0.375,1,0.375,0,0.625,0,0.625,0.25,0.375,0,0.625,0.25,0.375,0.25,0.125,0.5,0.375,0.5,0.375,0.75,0.125,0.5,0.375,0.75,0.125,0.75,0.375,0.5,0.625,0.5,0.625,0.75,0.375,0.5,0.625,0.75,0.375,0.75,0.375,0.25,0.625,0.25,0.625,0.5,0.375,0.25,0.625,0.5,0.375,0.5

]

}

,{

"name": "texcoord1",

"type": "float32",

"count": 2,

"data": [

]

}

]
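The empty `texcoord1` stream in the dump above is what a missing second UV channel looks like in the exported buffer. A small sketch for spotting it without reading the numbers by eye follows. It assumes only the layout visible in the dump (a JSON list of streams with `name`, `type`, `count` and `data`); the helper names and the shortened sample are made up for illustration.

```python
import json


def empty_streams(buffer_text: str) -> list:
    """Return the names of streams whose data array is empty."""
    streams = json.loads(buffer_text)
    return [s["name"] for s in streams if len(s["data"]) == 0]


def vertex_counts(buffer_text: str) -> dict:
    """Map each stream name to its vertex count (len(data) // count)."""
    streams = json.loads(buffer_text)
    return {s["name"]: len(s["data"]) // s["count"] for s in streams}


# Shortened stand-in for a file like cube.buffer (a single triangle).
sample = """
[
  {"name": "position",  "type": "float32", "count": 3,
   "data": [1,1,1, -1,1,1, -1,-1,1]},
  {"name": "texcoord0", "type": "float32", "count": 2,
   "data": [0.625,0.5, 0.875,0.5, 0.875,0.75]},
  {"name": "texcoord1", "type": "float32", "count": 2, "data": []}
]
"""
print(empty_streams(sample))
print(vertex_counts(sample))
```

A healthy export should report the same vertex count for every stream and no empty ones.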

Ok. Check your channels in Blender. You need two channels for a material. One should be the base texture (before you apply LightMapper) and one should be the Lightmap UVMap channel.

Sometimes I found Lightmapper makes temp channels, you can safely delete them. It will only work with two UV channels.

Also check the channel list for each object:

The base texture UV must be the render_active one. The UV lightmap channel must be the deactivated one.
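The channel rules above can be written down as a quick check. This is only a sketch: `check_uv_layers` is a hypothetical helper, and it assumes each channel exposes `name` and `active_render`, which is how Blender's `mesh.uv_layers` entries look as far as I know. Stand-in objects are used so it runs outside Blender.

```python
from types import SimpleNamespace


def check_uv_layers(uv_layers) -> list:
    """Return a list of problems; an empty list means the layout looks right."""
    layers = list(uv_layers)
    problems = []
    if len(layers) != 2:
        problems.append(f"expected 2 UV channels, found {len(layers)}")
    active = [layer.name for layer in layers if layer.active_render]
    if len(active) != 1:
        problems.append(f"expected 1 render-active channel, found {len(active)}")
    elif layers[0].name != active[0]:
        # The lightmap must be the second channel, so the base UV is the first.
        problems.append("render-active channel should be the base (first) UV")
    return problems


# Stand-ins for an object's UV channels (names are illustrative only).
good = [
    SimpleNamespace(name="UVMap", active_render=True),
    SimpleNamespace(name="UVMap_Lightmap", active_render=False),
]
with_temp = good + [SimpleNamespace(name="Temp", active_render=False)]

print(check_uv_layers(good))
print(check_uv_layers(with_temp))
```

In Blender you would call it with something like `obj.data.uv_layers` for each mesh object before exporting.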

I'm going to improve this whole process because it's kind of intertwined with previous code. Let me know how the above turns out.

If you go to the github repo, there is a sample lightmap test blend file in the test folder to examine.

Note: Much of this is about trying to support both LightMapper (which is an ‘ok’ tool) and normal Baked lightmaps, which can be a bit better manually tweaked.

Thanks, UVMap is enabled.

1) The scene uses pbr-simple.material, so it looks like it was not imported correctly.

2) _LightMap is added to the material name.

3) In the material I do not use the first UV. I think that is the problem :)

- I will try adding a UV and a texture instead of base_color.

This setup is fine. I don't actually often add the uvmap as a source input, but you can add it into the shader nodes if you want. When you use a color like this, it won't matter; just make a channel (hit the + button) and make it active.

I’ll add some code to detect this (color vs colormap). The thing is, when I export, the color actually becomes a color map.

Oh. Also, if the results look a little weird in the lightmap, you may need to switch the lightmap node Color Space from Raw to sRGB, but again, I've found it unnecessary for most tests.

Oh, sorry, forget all my posts.

It looks like the plugin was not updated to 1.3 :)

I installed the plugin again and imported the scene again.

It worked.

Awesome. Glad it worked. I'm definitely going to improve it this week so it's a little more friendly. Adding the material name suffix and the other bits are a bit confusing. I'd like to get all of that removed, so that you run LightMapper, the tool detects it, and it just adds the lightmap material in Defold.

Should get some time on it Wednesday. Thanks for trying it out and leaving feedback. Really helps with improving the tool.

Problems with lightmap textures.

When I sync the scene, it creates png files that can't be opened :(

I need to manually convert hdr to png in Photoshop.

It looks like the plugin copies the hdr file and just changes the extension to png.

Yeah, I had that happen in one test I did. Try changing the ColorSpace type in the baked shader node, or just load the temp baked png into the node.

The exporter should always convert other image types to png, but hdr does some funny things and fails inconsistently.
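If you want to confirm that a `.png` is really an hdr file with a new name, the first bytes of the file give it away. A minimal sketch, with hypothetical helper names: real PNG files start with an 8-byte signature, while Radiance hdr files start with the text `#?RADIANCE` (or sometimes `#?RGBE`).

```python
PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"          # fixed 8-byte PNG header
HDR_PREFIXES = (b"#?RADIANCE", b"#?RGBE")     # Radiance RGBE text headers


def sniff_image_type(header: bytes) -> str:
    """Guess the real format from the first bytes of a file."""
    if header.startswith(PNG_SIGNATURE):
        return "png"
    if header.startswith(HDR_PREFIXES):
        return "hdr"
    return "unknown"


def sniff_file(path: str) -> str:
    """Read just enough of the file to identify it."""
    with open(path, "rb") as f:
        return sniff_image_type(f.read(16))


# In-memory examples so the sketch runs without any files on disk.
print(sniff_image_type(b"\x89PNG\r\n\x1a\n\x00\x00\x00\rIHDR"))
print(sniff_image_type(b"#?RADIANCE\nFORMAT=32-bit_rle_rgbe\n"))
```

Calling `sniff_file` on one of the exported lightmap textures and getting `hdr` back would mean the file was renamed rather than converted.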

New release:

I have modified the exporter to be able to support native exporting of the LightMapper tool.

Additionally, I made the requirements for material support for lightmaps a fair bit simpler. The hdr texture conversion has also been fixed: hdr maps are now converted to png correctly.

Example of a more complex scene in Blender:

The import into Defold needed only one fix: the scaling on the floor mat had to be modified on the X axis (it was negative?).

Thanks, now it is much easier :)

1) The plugin version in Blender is 1.3.0. It looks like you forgot to increase the version :)

2) Do I need to remove the multiply node manually as before? If I do not remove it, the object's texture is not exported to Defold.

3) When I use your pbr shader I get strange behavior. The color is very different when I rotate the camera.

4) I do not need pbr rendering. I only need the texture color and shadow. Is this the correct shader? I removed everything except emissive and albedo.

```glsl
// Original work by Martia A Saunders
// https://dominium.maksw.com/articles/physically-based-rendering-pbr/pbr-part-one/

// uniform samplerCube cubeMap ;
uniform sampler2D emissiveMap ;
uniform sampler2D albedoMap ;

uniform vec4 tint;
uniform vec4 params;

varying vec3 vvLocalSurfaceNormal ;
varying vec3 vvLocalSurfaceToLightDirection;
varying vec3 vvLocalReflectedSurfaceToViewerDirection;
varying vec2 vuvCoord0 ;
varying vec2 vuvCoord1 ;
varying vec3 vvLocalSurfaceToViewerDirection;
varying vec2 vN;

const float cpi = 3.14159265358979323846264338327950288419716939937510f ;

float computeFresnelTerm(float fZero, vec3 vSurfaceToViewerDirection, vec3 vSurfaceNormal)
{
    float baseValue = 1.0 - dot(vSurfaceToViewerDirection, vSurfaceNormal);
    float exponential = pow(baseValue, 5.0) ;
    float fresnel = exponential + fZero * (1.0 - exponential) ;
    return fresnel ;
}

float chiGGX(float f)
{
    return f > 0.0 ? 1.0 : 0.0 ;
}

// APPROVED! Works as expected
float computeGGXDistribution(vec3 vSurfaceNormal, vec3 vSurfaceToLightDirection, float fRoughness)
{
    float fNormalDotLight = clamp(dot(vSurfaceNormal, vSurfaceToLightDirection), 0.0, 1.0) ;
    float fNormalDotLightSquared = fNormalDotLight * fNormalDotLight ;
    float fRoughnessSquared = fRoughness * fRoughness ;
    float fDen = fNormalDotLightSquared * fRoughnessSquared + (1.0 - fNormalDotLightSquared);
    return clamp((chiGGX(fNormalDotLight) * fRoughnessSquared) / (cpi * fDen * fDen), 0.0, 1.0);
}

float computeGGXPartialGeometryTerm(vec3 vSurfaceToViewerDirection, vec3 vSurfaceNormal, vec3 vLightViewHalfVector, float fRoughness)
{
    float fViewerDotLightViewHalf = clamp(dot(vSurfaceToViewerDirection, vLightViewHalfVector), 0.0, 1.0) ;
    float fChi = chiGGX(fViewerDotLightViewHalf / clamp(dot(vSurfaceToViewerDirection, vSurfaceNormal), 0.0, 1.0));
    fViewerDotLightViewHalf *= fViewerDotLightViewHalf;
    float fTan2 = (1.0 - fViewerDotLightViewHalf) / fViewerDotLightViewHalf;
    return (fChi * 2.0) / (1.0 + sqrt(1.0 + fRoughness * fRoughness * fTan2)) ;
}

void main()
{
    //vec3 mappedNormal = normalize(texture(normalMap, vuvCoord0).xyz);
    // vec3 vNormalisedLocalSurfaceNormal = normalize((vvLocalSurfaceNormal + mappedNormal) * 0.5) ;
    // vec3 vNormalisedLocalSurfaceToLightDirection = normalize(vvLocalSurfaceToLightDirection) ;
    // vec3 vNormalisedLocalReflectedSurfaceToViewerDirection = normalize(vvLocalReflectedSurfaceToViewerDirection) ;
    // vec3 vNormalisedLocalSurfaceToViewerDirection = normalize(vvLocalSurfaceToViewerDirection) ;
    // vec3 vLocalLightViewHalfVector = normalize(vNormalisedLocalSurfaceToLightDirection + vNormalisedLocalSurfaceToViewerDirection) ;
    // float fLightIntensity = max(dot(vNormalisedLocalSurfaceToLightDirection, vNormalisedLocalSurfaceNormal), 0.0) ;
    //
    // vec4 amrtex = texture(aoMetallicRoughnessMap, vuvCoord0);
    // float fMetalness = amrtex.g ;
    // float fRoughness = max(0.001, amrtex.b ) ;
    // float distributionMicroFacet = computeGGXDistribution(vNormalisedLocalSurfaceNormal, vNormalisedLocalSurfaceToLightDirection, fRoughness) ;
    // float geometryMicroFacet = computeGGXPartialGeometryTerm(vNormalisedLocalSurfaceToViewerDirection, vNormalisedLocalSurfaceNormal, vLocalLightViewHalfVector, fRoughness) ;
    // float microFacetContribution = distributionMicroFacet * geometryMicroFacet ;
    // float fLightSourceFresnelTerm = computeFresnelTerm(0.5, vNormalisedLocalSurfaceToViewerDirection, vNormalisedLocalSurfaceNormal) ;

    vec4 rgbAlbedo = texture(albedoMap, vuvCoord0) * tint * params.y;
    vec3 rgbEmissive = texture(emissiveMap, vuvCoord1).rgb;

    // vec3 rgbFragment = rgbAlbedo.rgb * (1.0 - fMetalness);
    vec3 rgbFragment = rgbAlbedo.rgb;

    // vec3 rgbSourceReflection = texture2D( reflectionMap, vN ).rgb * fRoughness;
    // vec3 rgbReflection = rgbSourceReflection ;
    // rgbReflection *= rgbAlbedo.rgb * fMetalness ;
    // rgbReflection *= fLightSourceFresnelTerm ;
    // rgbReflection = min(rgbReflection, rgbSourceReflection) ; // conservation of energy

    // vec3 rgbSpecular = vec3(0.0) ;
    // if (fLightIntensity > 0.0)
    // {
    // rgbSpecular = vec3(params.z) ;
    // rgbSpecular *= microFacetContribution * fLightSourceFresnelTerm ;
    // rgbSpecular = min(vec3(1.0), rgbSpecular) ; // conservation of energy
    // }

    float ambientLevel = 0.5 * (1.0 - params.x) + params.x;

    // rgbFragment += rgbSpecular;
    rgbFragment *= ambientLevel;
    // rgbFragment += rgbReflection ;

    // rgbFragment.r = mix(rgbFragment.r,rgbEmissive.r,0.5);
    // rgbFragment.g = mix(rgbFragment.g,rgbEmissive.g,0.5);
    /// rgbFragment.b = mix(rgbFragment.b,rgbEmissive.b,0.5);

    rgbFragment += vec3(rgbEmissive.r + params.w, rgbEmissive.g + params.w, rgbEmissive.b + params.w) ;

    // rgbFragment *= amrtex.r;

    gl_FragColor = vec4(rgbFragment, rgbAlbedo.a);
}
```

5) The first image is without rgbEmissive.

The second image is with rgbEmissive.

Maybe it is possible to make it look less gray? I am a noob at 3D shaders, so I have no idea how to work with albedo and emissive.

Thanks :)

Hi, glad it's working (sort of?).

- Yeah, I just checked that; it looks like I might not have uploaded the latest build. I’ll rectify this once I have fixed a couple of bugs I have found (probably on Saturday).

- You shouldn't need to remove the multiply node, but this might be because of 1).

- This is actually related to the specular. If you set the Z param in the material to 0, or to anything below 0.1, this should be reduced.

- The PBR render material is overkill if you only need colour, without metallic and roughness rendering.

I’ll add a simple one here if you want, which uses just the second UV channel and is more like the model material.

- The grey comes from the params variable in the material again.

The X and W values in params control how much impact the diffuse and emissive have on the output color. If you increase the X value to, say, 0.5 or higher and reduce the W value to 0.1 or 0.0, or somewhere in between (you can go negative too), you should be able to find a suitable look.

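To see why those two values matter, here is the arithmetic of the stripped-down fragment shader posted above, redone in plain Python. It is only a sketch of that posted shader (albedo and emissive, no specular), not of the full PBR material; `shade` is a hypothetical name, colours are RGB tuples in the 0..1 range, and nothing is clamped.

```python
def shade(albedo, emissive, params, tint=(1.0, 1.0, 1.0)):
    """Mirror the posted shader: albedo * tint * y * ambient + (emissive + w)."""
    x, y, _z, w = params  # z (specular) is unused in the stripped-down shader
    ambient = 0.5 * (1.0 - x) + x  # x = 0 gives 0.5, x = 1 gives 1.0
    return tuple(
        a * t * y * ambient + (e + w)
        for a, t, e in zip(albedo, tint, emissive)
    )


red = (0.8, 0.2, 0.2)      # made-up albedo sample
shadow = (0.0, 0.0, 0.0)   # fully black lightmap texel

# High W lifts every channel by the same amount, which reads as grey.
washed_out = shade(red, shadow, params=(0.0, 1.0, 0.0, 0.5))
# Higher X with W at zero keeps the albedo colour and a black shadow offset.
saturated = shade(red, shadow, params=(1.0, 1.0, 0.0, 0.0))

print(washed_out)
print(saturated)
```

Because W is added equally to red, green and blue, a large W narrows the gap between the channels relative to their brightness, which is the grey look in the screenshots.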

Here's the property I'm referring to:

Thanks :)

Yes, please add a simple one.